Introduction

At Indigo, we have been investigating the use of Docker containers for more than two years. Given recent developments in Docker tooling, it is easier than ever to get started with and operate containers. Leveraging containers can provide a lot of flexibility and efficiency, but they also require new approaches to configuring and running our services.

With ForgeRock’s recent developments in DevOps and the industry’s interest in Docker, we felt it was a great time to share our internal work to embrace this new paradigm.

As we started our investigation of Docker, we quickly realized it could be a great tool for experimentation and proofs of concept, and could potentially change the way we collaborate on our various projects. Our work now provides a functional demonstration of some of ForgeRock’s salient features.

When designing our Docker stack, we wanted to align as closely as possible with modern container deployment architecture while integrating our deep knowledge of the ForgeRock components. Through multiple iterations, we have refined our approach. The result is an easy-to-use, fully automated integration of the following products: OpenAM, OpenDJ, OpenIDM and OpenIG.

Our stack is ideal for quick POCs: it provides a platform that any organization can leverage to rapidly configure and deploy solutions without standing up large infrastructure.

In this three-part series, we will cover topics that address the design, operationalization and security of our Docker deployment.

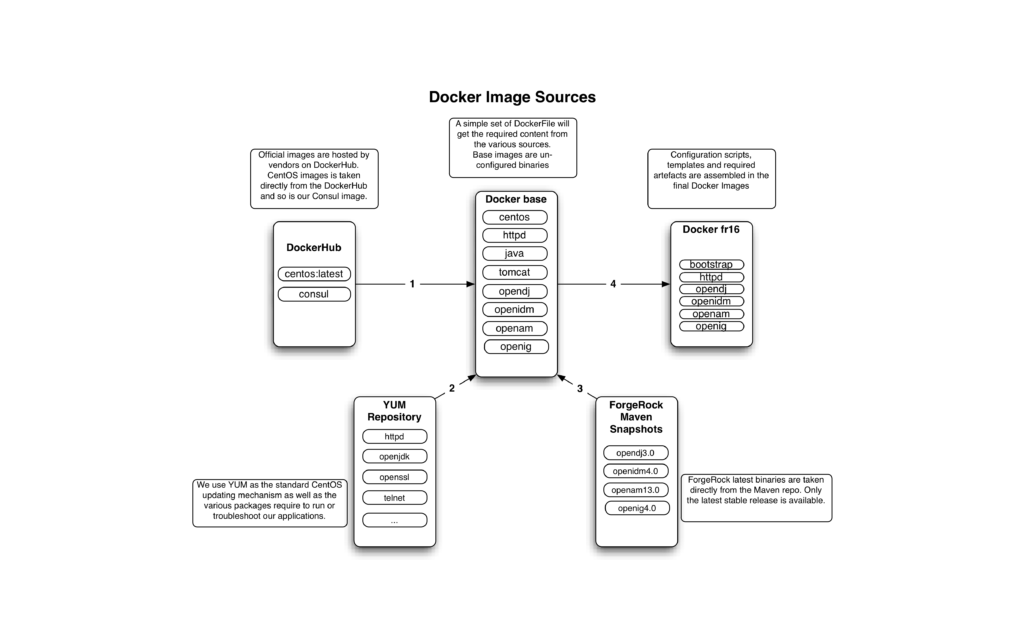

Image Structure

In order to fully utilize Docker and the matching infrastructure, we built an image structure that attempts to limit data transfers and simplify maintenance. See AUFS storage for more background on how image layers work.

We have created two classes of images. The “base” images contain all the required binaries but no configuration. As modifications to these files are infrequent, we minimize the rate at which we download third-party binaries. One tradeoff is that changes to these images necessitate rebuilding all the images that build on them.

The “fr16” images are built on top of the base images. This gives us consistency, as we control the whole image pipeline. It also limits re-downloading of image layers, as they are shared across the fr16 images. These images contain configuration templates and scripts that are executed when we start the containers, allowing us to create different environments as we see fit.

Figure 1 below shows an overview of our “fr16” images:

Figure 1: Docker Image Sources

Immutability

Container images are meant to be portable, widely distributed and reusable when possible. To achieve these goals, we must remove any configuration that is not constant across environments.

Our strategy for image immutability is to create templates of all configuration files that contain variables. For now, we treat the following attributes as variables:

- Domain

- LDAP Suffix

- Admin password

These provide a good starting point for an environment-agnostic platform. As we keep refining our work, we hope to expand this list to cover other variables as the need arises.

When these images are turned into running containers, they connect to a distributed key/value (K/V) store (running in a separate container) and retrieve the values required to turn templates into configuration files.
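As a rough illustration of this idea, the sketch below renders one unchanged template for two environments. It is written in Python for brevity and is not the code shipped in the images: Python's `string.Template` stands in for the real templating tool, a plain dictionary stands in for the K/V store, and the key names, template text and sample values are all hypothetical.

```python
from string import Template

# Hypothetical configuration template baked into an image. It holds
# placeholders only, never environment-specific values.
CONFIG_TEMPLATE = Template(
    "server.fqdn=openam.$domain\n"
    "directory.suffix=$ldap_suffix\n"
    "admin.password=$admin_password\n"
)


def render_config(kv_store: dict) -> str:
    """Fill the template from a dict standing in for the K/V store.

    Template.substitute raises KeyError when a value is missing, so a
    half-configured environment fails loudly instead of silently.
    """
    return CONFIG_TEMPLATE.substitute(kv_store)


# Two environments, one unchanged template (all values are made up).
dev = {"domain": "dev.example.com", "ldap_suffix": "dc=dev,dc=example,dc=com",
       "admin_password": "dev-secret"}
prod = {"domain": "example.com", "ldap_suffix": "dc=example,dc=com",
        "admin_password": "prod-secret"}

if __name__ == "__main__":
    print(render_config(dev))
    print(render_config(prod))
```

Because the values live outside the image, the same image can be promoted from one environment to the next without being rebuilt.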

Persistence

Generally speaking, containers are well suited for 12-factor apps. The ForgeRock stack, however, has several components that do not fully align with this stateless paradigm, including OpenDJ, OpenIDM and OpenAM.

We address the need for persistence in these components by leveraging Docker volumes. By placing all our configuration and user account data in these purpose-built volumes, we ensure any modifications persist across container restarts, providing a great solution for our stateful containers.

Orchestration

There are many benefits to packaging each component within its own container, but doing so creates some challenges: orchestration is needed to ensure each component is started and configured in the right sequence.

We currently use docker-compose to ensure the various dependencies are started for a given container. This allows us to capture the stack's dependencies in a simple YAML file and have docker-compose take care of bringing up the required components.
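To make the ordering idea concrete, here is a minimal Python sketch of how a start order can be derived from declared dependencies. It is not docker-compose's implementation, only an illustration of the principle. The dependency map is hypothetical: it mirrors the kind of information a compose file's `depends_on` entries would express, and apart from OpenAM depending on OpenDJ, the service names and edges are assumptions.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical dependency map: service -> services it needs started first.
DEPENDS_ON = {
    "consul": [],
    "opendj": ["consul"],
    "openam": ["opendj"],
    "openidm": ["opendj"],
    "openig": ["openam"],
}


def start_order(depends_on: dict) -> list:
    """Return a start order in which every service follows its dependencies.

    TopologicalSorter raises graphlib.CycleError if the map is circular.
    """
    return list(TopologicalSorter(depends_on).static_order())


if __name__ == "__main__":
    print(start_order(DEPENDS_ON))
```

An ordering like this only says which container is launched first. It says nothing about whether the software inside that container has finished configuring itself.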

docker-compose ensures the containers are brought up in the right sequence, but it is not aware of our applications' state. We address application dependencies in the following section.

Discoverability

As discussed in the previous section, having containers started in the right sequence does not ensure our application dependencies are met. Each application must be able to discover the current state of its dependencies.

We have designed a mechanism that leverages our K/V store so that each application verifies its dependencies are configured before attempting to configure itself. This makes applications aware of their surroundings and can be extended to support clustering, expansion and shrinking.

By looking for specific flags in our K/V store, we can discover and orchestrate the configuration of the complete stack, from the ground up. For example, our OpenAM instance will not begin its configuration process until OpenDJ is fully configured.
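The flag check can be sketched in a few lines of Python. This is an assumption-laden illustration rather than the scripts used in the images: the key name `status/opendj`, the value `"ready"`, and the function names are hypothetical, and the K/V store is represented by any callable that returns the value for a key (or `None` when the key is absent).

```python
import time
from typing import Callable, Optional


def wait_for_flag(get_value: Callable[[str], Optional[str]], key: str,
                  timeout: float = 60.0, interval: float = 1.0,
                  sleep: Callable[[float], None] = time.sleep) -> bool:
    """Poll the K/V store until `key` holds "ready" or the timeout expires.

    Returns True once the flag is seen, False if the deadline passes first.
    """
    deadline = time.monotonic() + timeout
    while True:
        if get_value(key) == "ready":
            return True
        if time.monotonic() >= deadline:
            return False
        sleep(interval)


def configure_openam(get_value: Callable[[str], Optional[str]]) -> str:
    """Configure OpenAM only after OpenDJ has flagged itself as ready."""
    if not wait_for_flag(get_value, "status/opendj", timeout=5.0, interval=0.1):
        return "aborted: OpenDJ not ready"
    return "OpenAM configured"  # placeholder for the real configuration step


if __name__ == "__main__":
    store = {"status/opendj": "ready"}  # dict standing in for the K/V store
    print(configure_openam(store.get))
```

Passing the lookup function in as a parameter keeps the waiting logic independent of any particular K/V client, so the same loop could be pointed at a real store.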

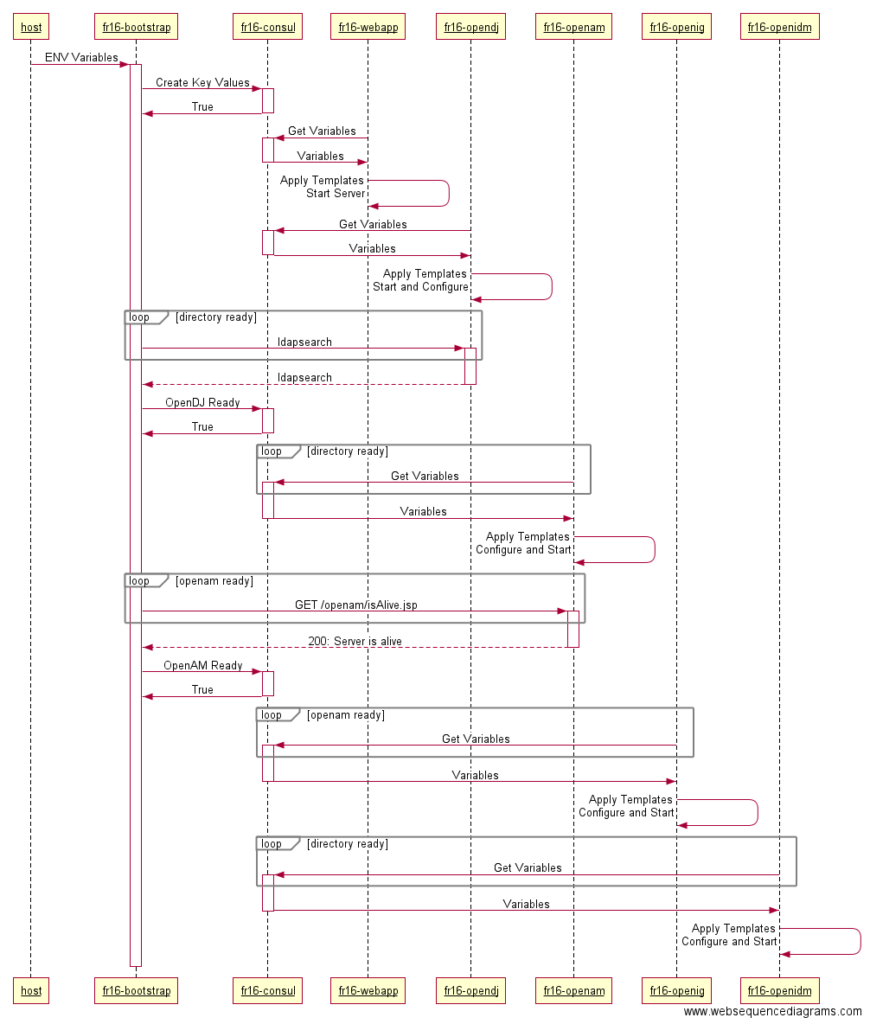

Bootstrapping

As previously highlighted, we have created immutable images that do not contain any environment-specific values. When these images are run, they become Docker containers that hold multiple templates, which must be converted into configuration files.

To convert our templates, we must provide a consistent approach to supplying variables to our containers at runtime. It is possible to pass environment variables to every container, but this approach created a lot of redundant declarations and was tedious to maintain.

We created a specialized ephemeral container that is responsible for taking a set of environment variables and bootstrapping these values in our K/V store. This gives us a single container that is aware of environment variables, further simplifying our configuration.

This same container is also responsible for validating that our components were started and configured. On success, a marker is added to our K/V store indicating that the service is ready. This centralized process allows components to check the status of their dependencies by validating this flag before attempting configuration.
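The two responsibilities described above can be sketched as follows. This is a hedged Python illustration, not the actual bootstrap container: the `FR_` prefix, the `config/` and `status/` key layout, the function names and the health check callable are all hypothetical, and a dictionary stands in for the K/V store.

```python
import os

# Hypothetical prefix selecting which environment variables get published.
ENV_PREFIX = "FR_"


def seed_store(environ: dict, store: dict, prefix: str = ENV_PREFIX) -> list:
    """Copy prefixed environment variables into the K/V store.

    FR_DOMAIN=example.com becomes store["config/domain"] = "example.com".
    Returns the sorted list of keys written.
    """
    written = []
    for name, value in environ.items():
        if name.startswith(prefix):
            key = "config/" + name[len(prefix):].lower()
            store[key] = value
            written.append(key)
    return sorted(written)


def mark_ready(store: dict, service: str, is_healthy) -> bool:
    """Write the readiness marker only if the health check passes."""
    if is_healthy(service):
        store["status/" + service] = "ready"
        return True
    return False


if __name__ == "__main__":
    kv = {}  # dict standing in for the K/V store
    print(seed_store(dict(os.environ), kv))
    print(mark_ready(kv, "opendj", lambda service: True), kv)
```

With this split, only one container ever reads the environment variables. Every other container reads the K/V store.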

Below is a slightly simplified view of the process where fr16-consul acts as our K/V store.

Figure 2: Bootstrap sequence

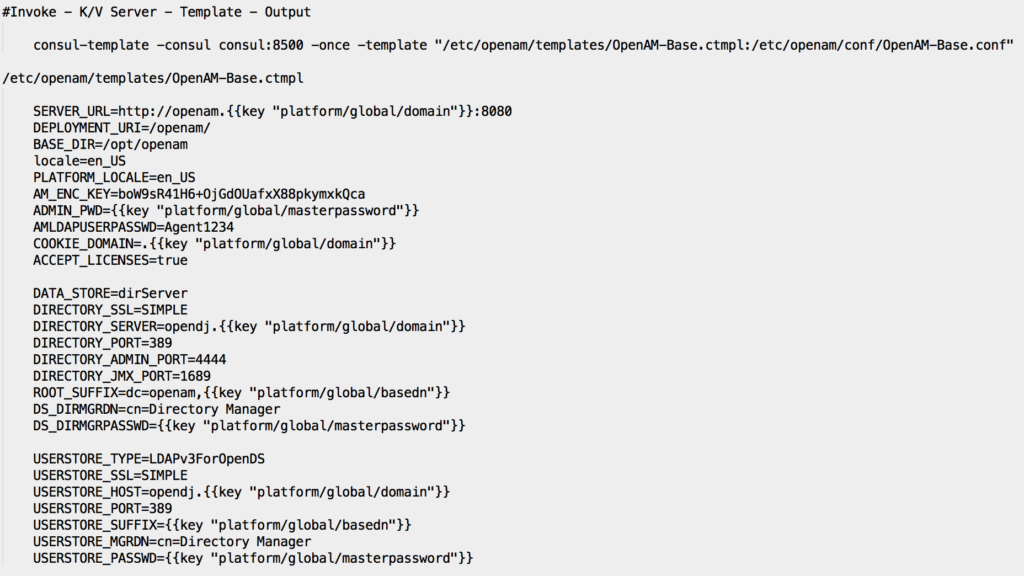

Templates

One of the great benefits of using a distributed key/value store is that many of them also offer a convenient way to populate values into a template. In our case, Consul offers a simple and robust application called consul-template, built for this exact purpose.

To leverage this tool, you simply replace your variables with the right syntax and make sure your template daemon is configured to use your K/V server as its source. With a single command, you can then convert templates into configuration files cleanly and efficiently.

Below is a sample template and the associated command used for the OpenAM base configuration:

Figure 3: OpenAM Configuration Template

Conclusion

In this entry, we covered the vision and design principles behind our effort to cleanly and efficiently deploy the ForgeRock components on Docker.

We are planning two additional entries to cover how to operate and how to secure our Docker deployment, so stay tuned for additional details!

In the meantime, we are pleased to share our efforts with the community. You can find links to the resources below.

Resources

Source Code of our Stack:

https://bitbucket.org/nseigneur/indigolabs/src

Built images on DockerHub:

https://hub.docker.com/u/indigolabs/